Setup local Spark Cluster on Mac and explore UI for Master, Workers, and Jobs(pyspark)

What is covered

- In this blog, I would describe my experiments about setting up a local (standalone) Spark cluster on Mac M1 machine.

- Would start pyspark with an option to use the master setup in above step.

- Explore the UI for:

- Master

- Worker

- Jobs (transformation and action operations, Directed Acyclic Graph)

Why local (standalone) server

- local server is a simple deployment mode and is possible to run the daemons in a single node

- Jobs submitted using python programs and could be explored using the same UI (would cover in a future blog)

Start the master and worker

- After installation of pyspark (using homebrew), scripts for starting the master and worker were available at the following location:

cd /opt/homebrew/Cellar/apache-spark/3.2.1/libexec/sbin

- start the server (note that would be specific to the machine)

% ./start-master.sh

starting org.apache.spark.deploy.master.Master, logging to /opt/homebrew/Cellar/apache-spark/3.2.1/libexec/logs/<your-local-logfile>.out

- check the log file for the spark master server details ( is obtained from the previous step>

% tail -10 /opt/homebrew/Cellar/apache-spark/3.2.1/libexec/logs/<your-local-logfile>.out

...

22/05/18 07:41:57 INFO Utils: Successfully started service 'sparkMaster' on port 7077.

22/05/18 07:41:57 INFO Master: Starting Spark master at spark://<your-spark-master>:7077

22/05/18 07:41:57 INFO Master: Running Spark version 3.2.1

22/05/18 07:41:57 INFO Utils: Successfully started service 'MasterUI' on port 8080.

...

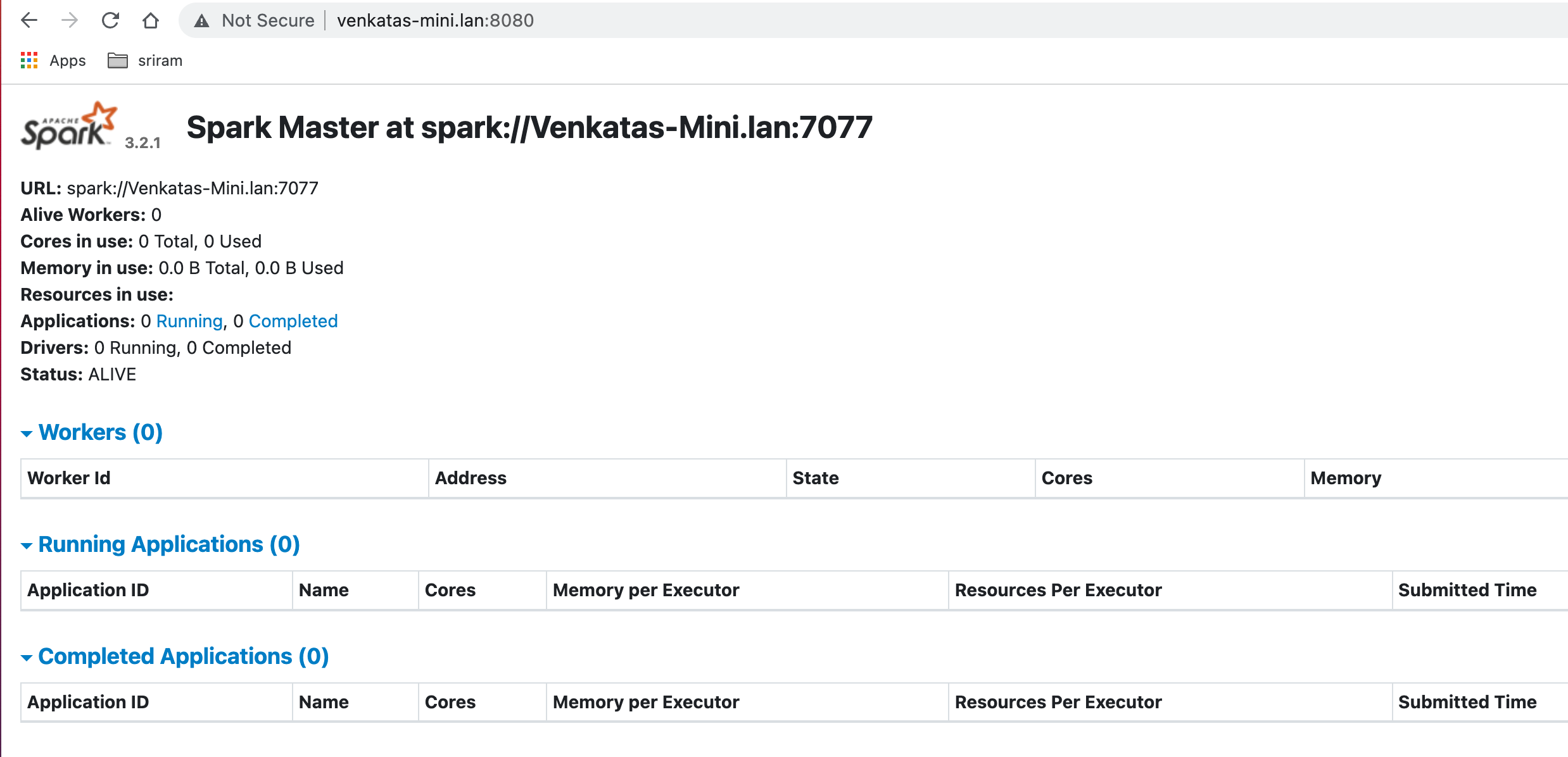

- check the master UI

- notice that workers are zero, since, we have not yet started any

- start the worker by selecting the number of cores and memory based on your system configuration.

- please check logfile in the previous step and replace with the appropriate value for

% ./start-worker.sh --cores 2 --memory 2G spark://<your-spark-master>:7077

starting org.apache.spark.deploy.worker.Worker, logging to /opt/homebrew/Cellar/apache-spark/3.2.1/libexec/logs/<your-local-worker-logfile>.out

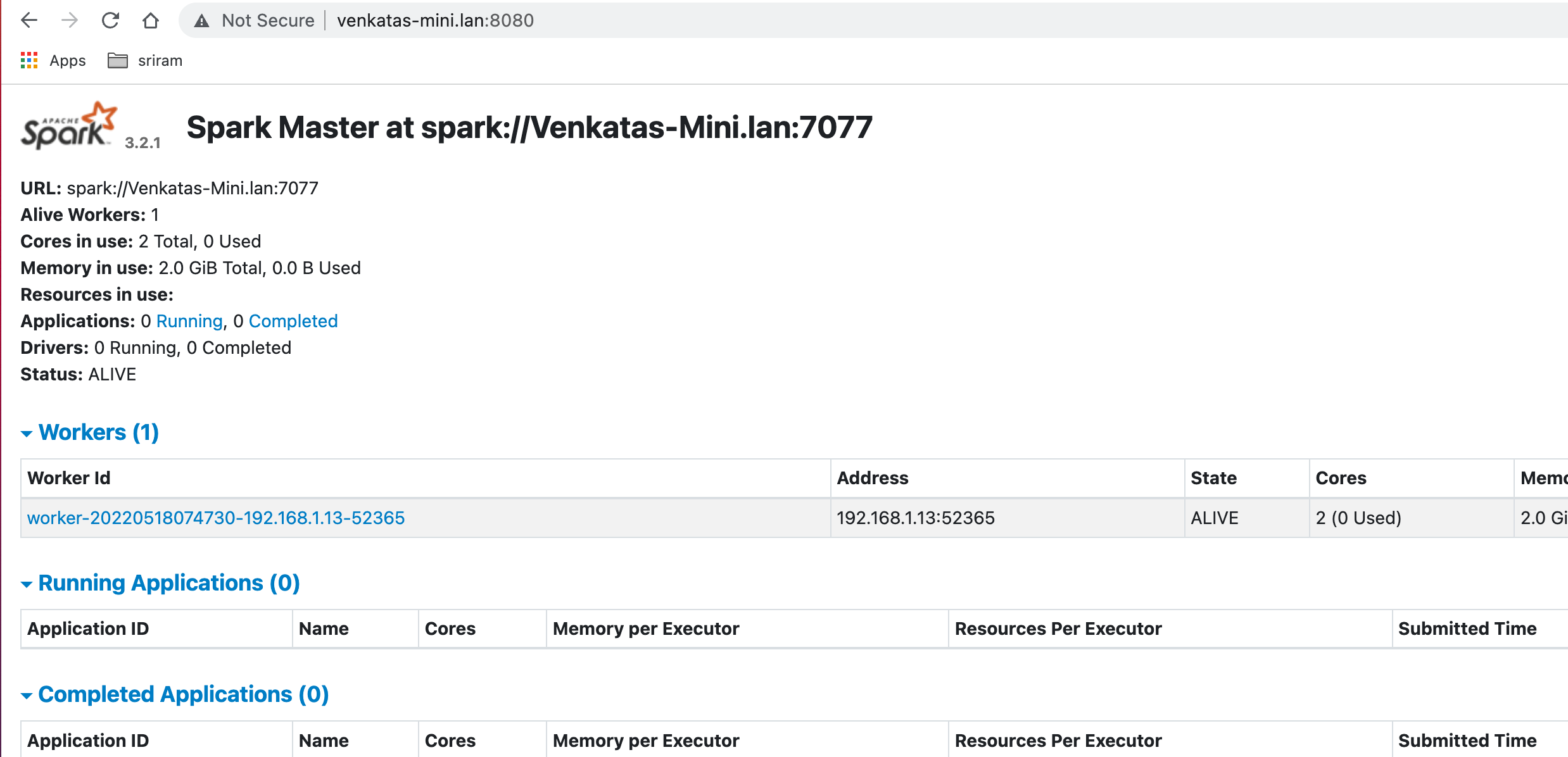

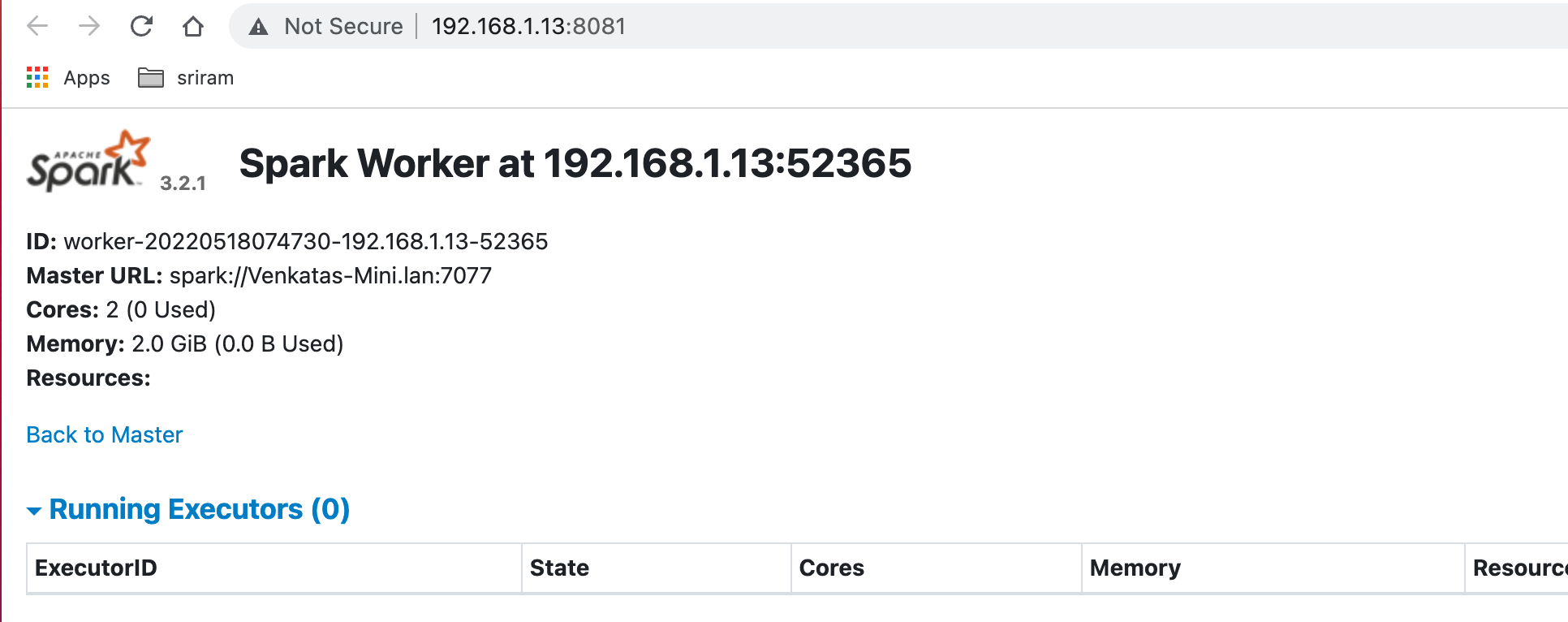

- check the master UI for worker information (now it shows 1 worker)

- also, check the worker UI for the cores and memory allocated

submit jobs using pyspark and observe jobs UI

- we will start pyspark to use the master setup above

- operations are performed on RDDs (Resilient Distributed Dataset)

- spark has two kinds of operations:

- Transformation → operations such as map, filter, join or union that are performed on an RDD that yields a new RDD containing the result

- Action → operations such as reduce, first, count that return a value after running a computation on an RDD

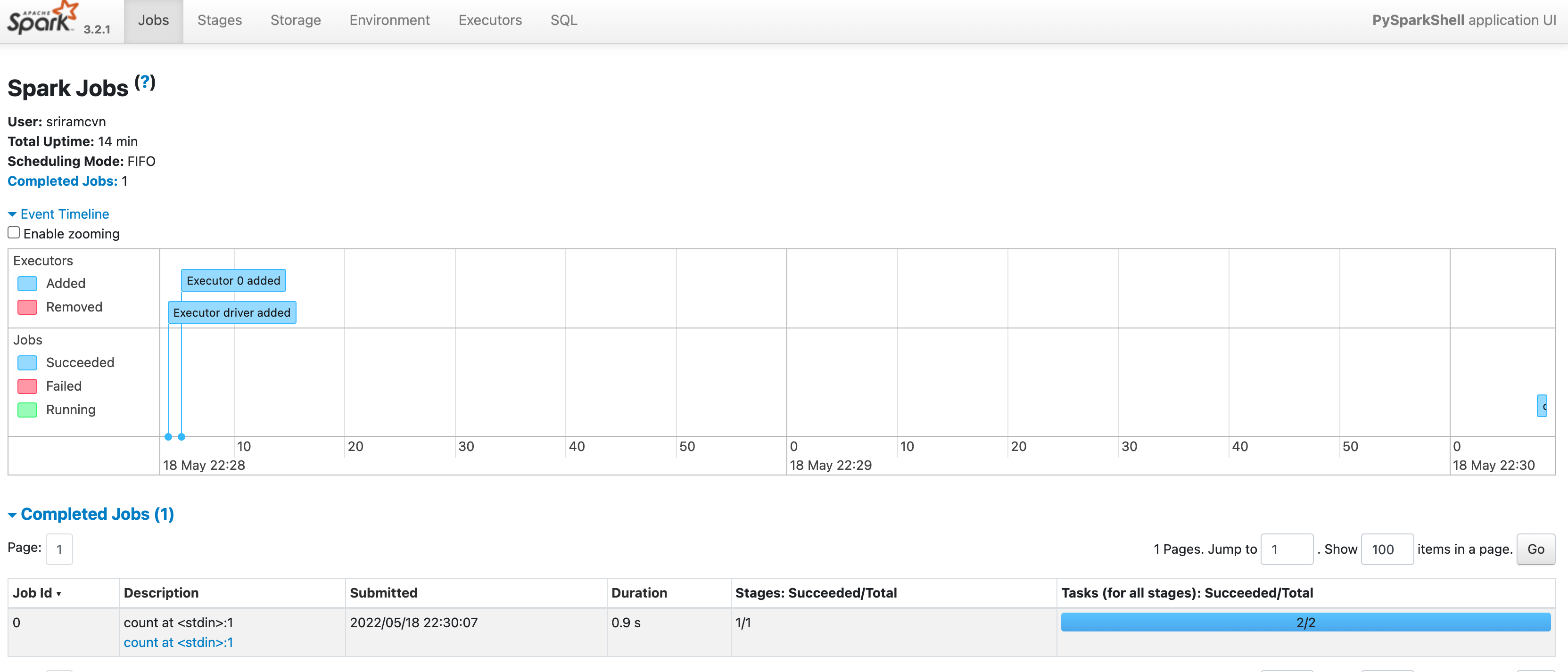

Actions could be displayed as jobs in the UI and transformations could be observed while exploring the DAG (Directed Acyclyic Graph) output

for pyspark to submit jobs to master, start as follows (replace with the appropriate value for :

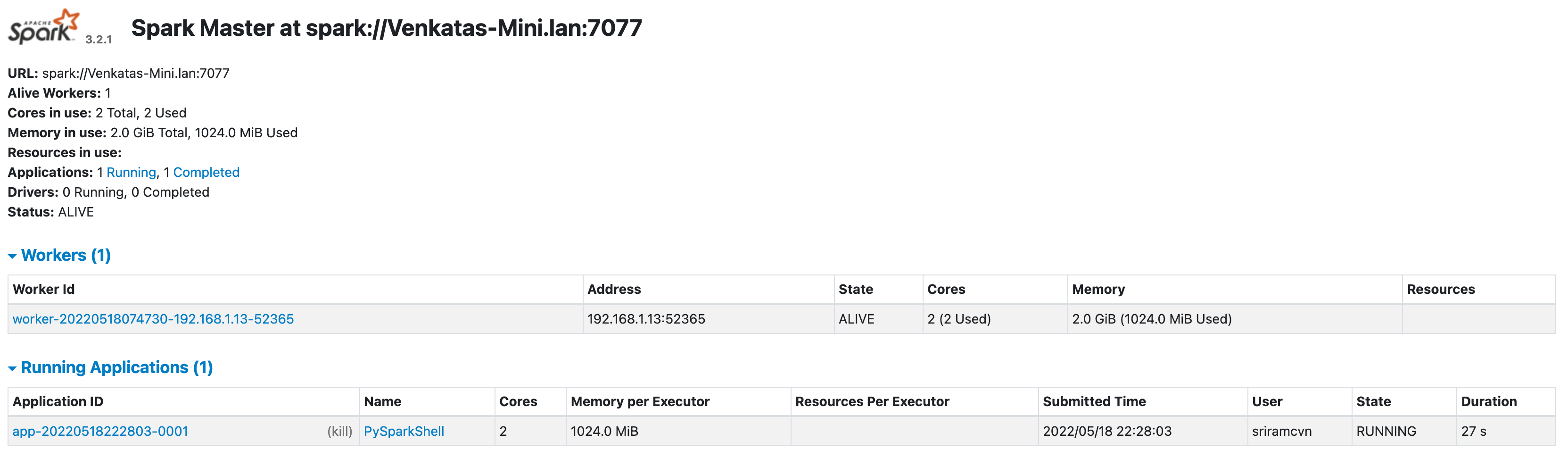

% pyspark --master spark://<your-spark-master>:7077Now observe the master that Application is listed

we will write a simple code in pyspark shell to do one transformation (parallelize) and one action (count) operation

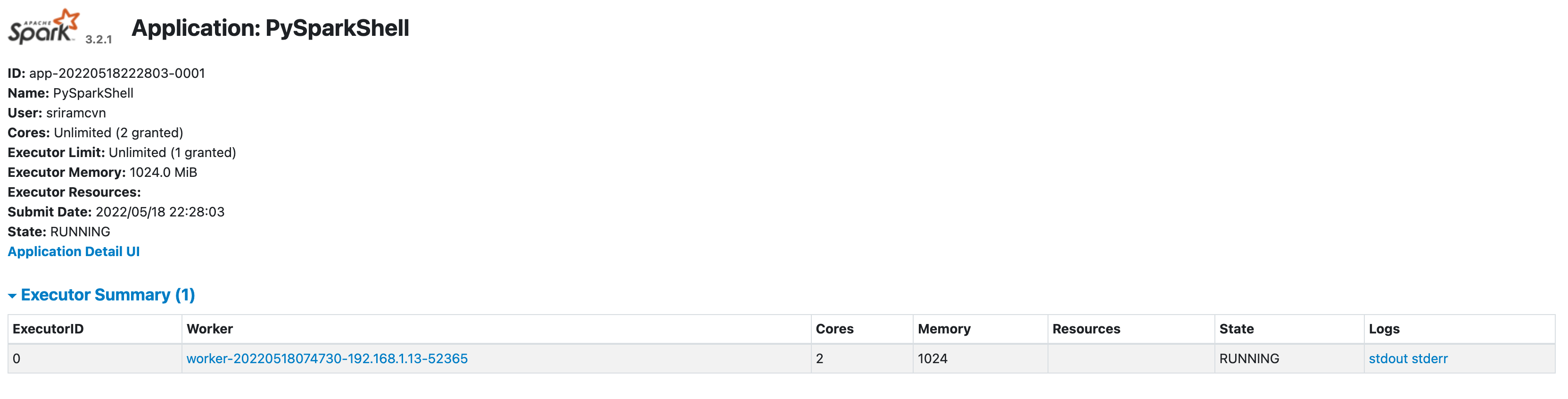

... Using Python version 3.9.7 (default, Sep 16 2021 08:50:36) Spark context Web UI available at http://venkatas-mini.lan:4040 Spark context available as 'sc' (master = spark://Venkatas-Mini.lan:7077, app id = app-20220518222803-0001). SparkSession available as 'spark'. >>> a=("hello","world","pyspark","local","mac","m1") >>> b = sc.parallelize(a) >>> b.count() 6 >>>From master, click on the application to check the application UI:

- In the application ui, click on "Application Detail UI" to get details on the jobs

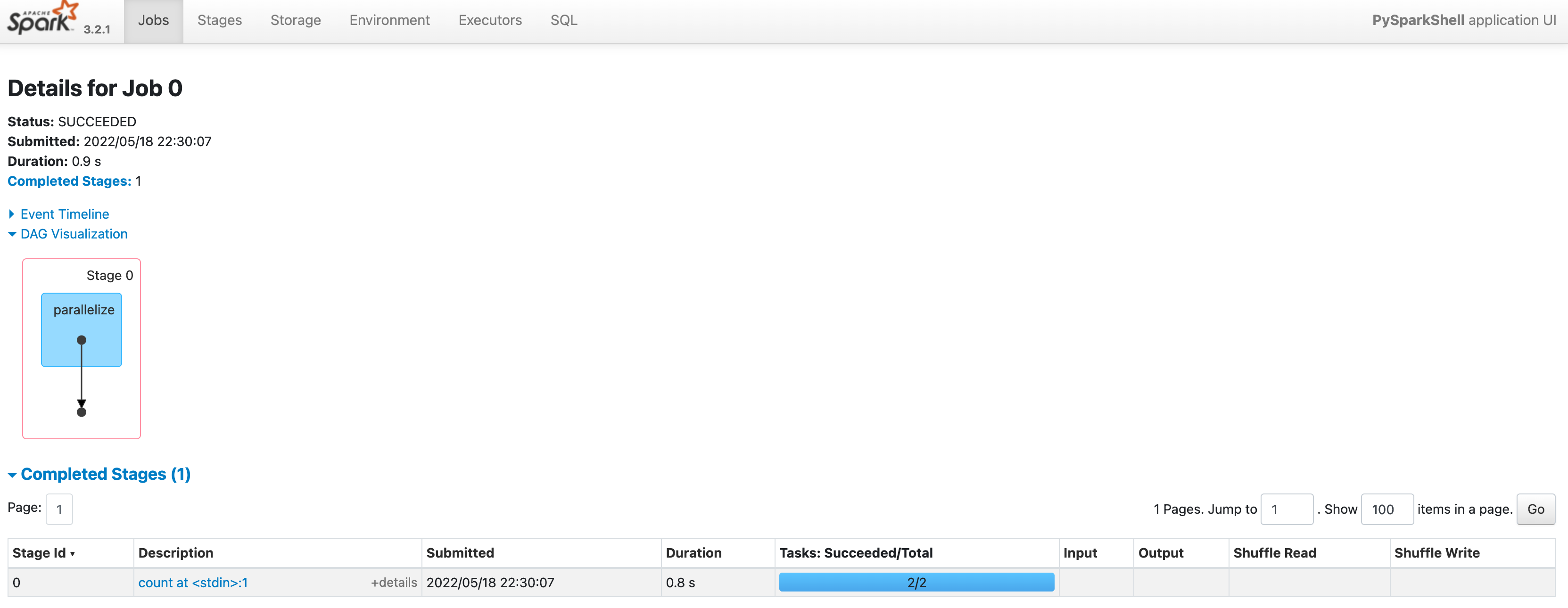

- to see DAG (Directed Acyclic Graph) visualization click on the job (under Description column)

- this is a simple DAG with only one transformation and one operation

Next steps

- submit spark jobs as python programs and check UI for master, worker and jobs

- try out additional spark operations, and actions, and check additional details like storage, etc. in the UI